Introduction

This research project is part of my Master’s program at the University of San Francisco, where I collaborated with the AT&T Alien Labs team. I would like to share a new approach to automate the extraction of key details from cybersecurity documents. The goal is to extract entities such as country of origin, industry targeted, and malware name.

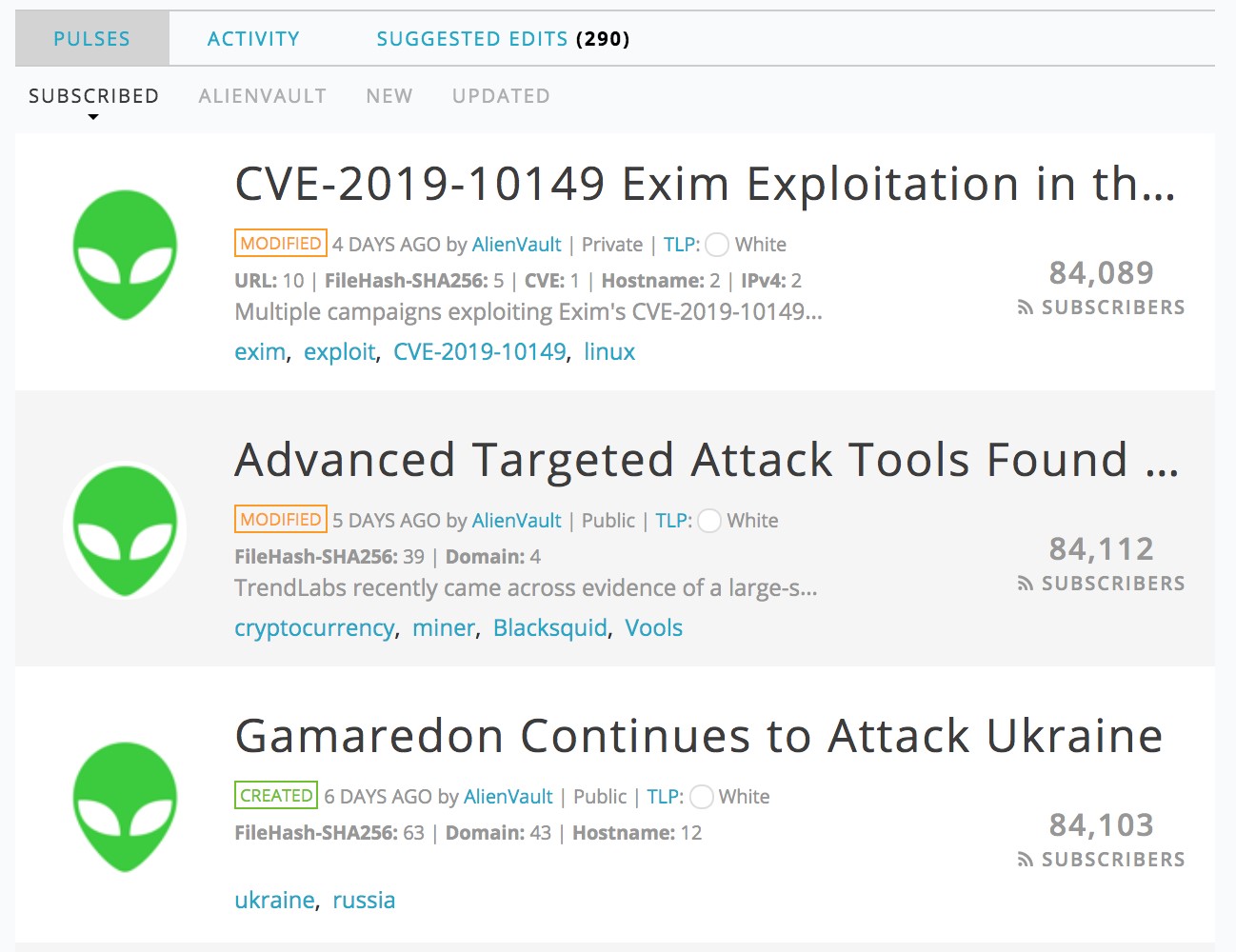

The data is obtained from the AlienVault Open Threat Exchange (OTX) platform:

Figure 1: The website otx.alienvault.com

The Open Threat Exchange is a crowd-sourced platform where users upload “pulses”, which contain information about a recent cybersecurity threat. A pulse consists of indicators of compromise and links to blog posts, whitepapers, reports, and other sources with details of the attack. The pulse normally contains a link to the full content (a blog post), together with key meta-data manually extracted from the full content (the malware family, the target of the attack, etc.).

Figure 2 is a screenshot of an example of a blog post that could be contained in a pulse:

Figure 2: Snippet of a blog post from “Internet of Termites” by AT&T Alien Labs

Figure 3 is a theoretical visualization of our end goal: the automated extraction of meta-data from the blog post, which can then be added to a pulse:

Figure 3: The same paragraph with entities extracted

This kind of threat intelligence collection is still manual: a human has to read and tag the text. However, machine learning techniques can be used to extract the information of interest. We trained custom named entity models on annotated, domain-specific data to tag pulses. This helps speed up the overall process of threat intelligence collection.

Approach and Modeling

We collected the data by scraping text from all the pulse reference links on the OTX platform. We focused on HTML and PDF sources and used appropriate document parsers. Since the sources are not consistent, we put in place many rule-based checks to clean the text. For example, IP addresses and hashes are replaced with tags like ‘IP_ADDRESS’ and ‘SHA_256’. We replaced these values rather than omitting them, in order to preserve the word sequence and any dependencies. Next, we had the large task of annotating the documents. Prodigy, the annotation tool from the makers of spaCy, makes this process much less painful than it used to be.
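The masking step described above can be sketched in a few lines. This is a minimal illustration, not the project's actual cleaning code: the regular expressions, the function name `mask_indicators`, and the sample sentence are all assumptions, and the real pipeline used many more rule-based checks.

```python
import re

# Hypothetical patterns; the exact rules used in the project are not given.
# The IPv4 pattern is deliberately loose and does not validate octet ranges.
IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")
SHA256_RE = re.compile(r"\b[a-fA-F0-9]{64}\b")


def mask_indicators(text: str) -> str:
    """Replace IP addresses and SHA-256 hashes with placeholder tags."""
    text = SHA256_RE.sub("SHA_256", text)
    text = IPV4_RE.sub("IP_ADDRESS", text)
    return text


# Made-up sample: a documentation-range IP and a dummy 64-character hex string.
sample = "The dropper at 203.0.113.7 fetched payload " + "ab12" * 16 + " over HTTP."
masked = mask_indicators(sample)
print(masked)
```

Because each indicator is swapped for a single tag instead of being deleted, the number of whitespace-separated tokens stays the same, which is what keeps the word sequence intact for the downstream model.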

Figure 4 below is an example annotation in which “Windows”, rather than “China”, is labeled as a country in the sentence. The confidence score for this annotation is very low, so we can reject it.

Figure 4: Example annotation from Prodigy

spaCy's built-in Named Entity Recognition (NER) model was our first approach. The current model architecture is not published, but this video explains it in more detail. We also built a custom bidirectional LSTM, an architecture that has gained popularity in recent years. LSTM (long short-term memory) models are very good at sequence labeling tasks like Named Entity Recognition, because they can learn the sequence dependencies between words in a larger context. A bidirectional LSTM reads each sentence both left to right and right to left, so we are intentionally using a model that takes into account both directions of dependencies within a sentence.
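To make "sequence labeling" concrete: a model of this kind predicts one label per token, using the context on both sides of that token. The sketch below shows how character-level entity annotations could be turned into per-token labels. It assumes the common BIO (begin / inside / outside) scheme and whitespace tokenization, neither of which is confirmed as the project's setup; the label names, the helper functions, and the malware name "ExampleBot" are hypothetical.

```python
from typing import List, Tuple

Span = Tuple[int, int, str]  # (start_char, end_char, label)


def span_of(text: str, phrase: str, label: str) -> Span:
    """Build a character span for the first occurrence of phrase in text."""
    start = text.index(phrase)
    return (start, start + len(phrase), label)


def whitespace_tokens(text: str) -> List[Tuple[str, int, int]]:
    """Split on whitespace and keep each token's character offsets."""
    tokens = []
    pos = 0
    for tok in text.split():
        start = text.index(tok, pos)
        end = start + len(tok)
        tokens.append((tok, start, end))
        pos = end
    return tokens


def bio_labels(text: str, spans: List[Span]) -> List[Tuple[str, str]]:
    """Assign a B-/I-/O label to every token, given character-level spans."""
    labeled = []
    for tok, start, end in whitespace_tokens(text):
        tag = "O"  # default: token is outside any entity
        for s_start, s_end, label in spans:
            if start >= s_start and end <= s_end:
                # First token of a span gets B-, later tokens get I-.
                tag = ("B-" if start == s_start else "I-") + label
                break
        labeled.append((tok, tag))
    return labeled


# Made-up sentence with a fictional malware name.
text = "ExampleBot hit banks in South Korea"
spans = [
    span_of(text, "ExampleBot", "MALWARE"),
    span_of(text, "banks", "INDUSTRY"),
    span_of(text, "South Korea", "COUNTRY"),
]
for token, tag in bio_labels(text, spans):
    print(f"{token:12s} {tag}")
```

Deciding that "Korea" continues a country name depends on the preceding "South", while tagging "South" as the start of one is easier when the following word is visible, which is the intuition behind reading the sentence in both directions.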

Figure 5 is a diagram of the model architecture we built:

Figure 5: An overview of the extraction architecture

Results and Conclusion

Figure 6: An example batch training session for our country model using spaCy

spaCy’s robust NER model significantly outperforms our custom LSTM model. Yet both models are better at recognizing countries and industries than malware names. We believe both models are overfit to our training data and don’t generalize well. So, in the future, we want to expand the training set by adding more domain-specific text such as cybersecurity blogs and whitepapers.
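A comparison across entity types like the one above is usually made with per-label precision, recall, and F1. The sketch below shows one way such scores could be computed, assuming exact-match scoring over (start, end, label) entity tuples. The metric choice, the function name `per_label_scores`, and the toy data are assumptions for illustration only; they are not the project's evaluation code or its results.

```python
from collections import defaultdict
from typing import Dict, Set, Tuple

Entity = Tuple[int, int, str]  # (start_token, end_token, label)


def per_label_scores(gold: Set[Entity], pred: Set[Entity]) -> Dict[str, Dict[str, float]]:
    """Exact-match precision, recall, and F1 for each entity label."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0})
    for ent in pred:
        # A prediction counts as correct only if span and label both match.
        counts[ent[2]]["tp" if ent in gold else "fp"] += 1
    for ent in gold - pred:
        counts[ent[2]]["fn"] += 1

    scores = {}
    for label, c in counts.items():
        p = c["tp"] / (c["tp"] + c["fp"]) if c["tp"] + c["fp"] else 0.0
        r = c["tp"] / (c["tp"] + c["fn"]) if c["tp"] + c["fn"] else 0.0
        f1 = 2 * p * r / (p + r) if p + r else 0.0
        scores[label] = {"precision": p, "recall": r, "f1": f1}
    return scores


# Toy annotations, invented purely to exercise the function.
gold = {(0, 1, "MALWARE"), (2, 3, "INDUSTRY"), (4, 6, "COUNTRY"), (9, 10, "MALWARE")}
pred = {(0, 1, "MALWARE"), (2, 3, "INDUSTRY"), (4, 6, "COUNTRY"), (9, 11, "MALWARE")}

scores = per_label_scores(gold, pred)
for label in sorted(scores):
    print(label, scores[label])
```

Running the same function on both the training set and a held-out set is a simple way to check for overfitting: a large gap between the two sets of scores suggests the model is not generalizing.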