This is part 2 of a three-part blog series. You can also read part 1 and part 3.

The Foundation of a Zero Trust Architecture (ZTA) talked about the guiding principles, or tenets, of Zero Trust. One of the tenets states that all network flows must be authenticated before being processed and that access is determined by dynamic policy. A network that is intended to never trust and always verify all connections requires technology that can determine confidence, authorize connections, and ensure that future transactions remain valid. The heart of any ZTA is an authorization core: equipment within the control plane of the network that determines this confidence and continually re-evaluates it for every request. Because this authorization core is part of the control plane, it needs to be logically separated from the portion of the network used for application data traffic (the data plane).

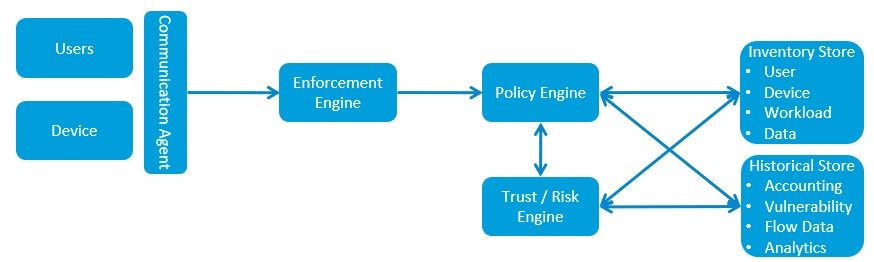

Depending on the designed ZTA and the overall approach, components of the authorization core may be combined into one solution or stand on their own as individual hardware and/or software-based solutions.

- Communication Agent – the source of the access should provide enough information for confidence to be calculated. Enhanced identity attributes such as user and asset status, location, authentication method and trust scoring should be included in every communication so that it can be properly evaluated.

- Enforcement Engine – also known as an Enforcement Point. This should be placed as close to the element of protection (the data) as possible. You might think of this as the data’s bodyguard. The Enforcement Engine will authorize the requested communication based on policy and continually monitor the traffic to stop it, if necessary, as requested by the Policy Engine. An Enforcement Engine may prevent a system holding the element of protection from being discoverable, for example.

- Policy Engine – makes the ultimate decision to grant access to the asset and informs the Enforcement Engine. The policy rules will depend on the implemented technology but will typically involve the who, what, when, where, why and how for access involving network services, endpoint and data classes.

- Trust/Risk Engine – analyzes the risk of a request or action. The Trust/Risk Engine informs the Policy Engine of deviations detected by an implemented trust algorithm, evaluates the Communication Agent’s data against data stores, and can use static rules and machine learning to continually update agent scores as well as component scores within the agent. A trust algorithm that computes a score-based confidence level from criteria, values and weights set by the enterprise, together with a contextual view of an agent’s history and other data, provides the most comprehensive approach to eliminating threats. Unlike an algorithm that ignores historical and other user data, a score and contextual-based trust algorithm can identify an attack that stays within a user’s role. For example, it may pick up on a user account or role that is accessing data outside normal business hours, in an unusual way, or from an unrecognized location. An alternative algorithm that relies solely on a specific set of qualified attributes may evaluate faster, but it will not have the historical context to recognize that the access request seems odd and to advise the Policy Engine to require stronger authentication before proceeding.

- Data Stores – as stated, a preferred approach is to implement a score and contextual-based trust algorithm. Therefore, the Trust/Risk and Policy Engines must reference a set of stored data in order to make policy decisions on access requests or changes in communication behavior. An inventory store that contains various elements related to users, devices, workloads, and classified data, correlated with the historical data of these elements and with behavior analytics, gives the decision makers what they need to make the appropriate access decision.
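To make the interplay between the Trust/Risk Engine, the Data Stores and the Policy Engine more concrete, here is a minimal Python sketch of a score and contextual-based trust algorithm. Everything in it is an assumption made for illustration: the attribute names, the weights, the penalty values, the thresholds and the `History` record are hypothetical and do not come from any standard or vendor product. An enterprise would set its own criteria, values and weights.

```python
from dataclasses import dataclass

# Hypothetical weights set by the enterprise (illustrative values only).
WEIGHTS = {
    "user_status": 0.3,
    "asset_status": 0.3,
    "auth_method": 0.2,
    "location": 0.2,
}


@dataclass
class AccessRequest:
    """Data a Communication Agent might attach to a request (assumed shape)."""
    user: str
    hour: int        # local hour of the request, 0-23
    location: str
    signals: dict    # attribute name -> score between 0.0 and 1.0


@dataclass
class History:
    """Historical context held in a data store (assumed shape)."""
    usual_hours: range
    known_locations: set


def trust_score(request: AccessRequest, history: History) -> float:
    """Weighted attribute score, reduced when the request deviates from history."""
    base = sum(WEIGHTS[name] * request.signals.get(name, 0.0) for name in WEIGHTS)
    penalty = 0.0
    if request.hour not in history.usual_hours:
        penalty += 0.2   # outside normal business hours for this user
    if request.location not in history.known_locations:
        penalty += 0.2   # location not seen before for this user
    return max(0.0, base - penalty)


def policy_decision(score: float, allow_at: float = 0.8, step_up_at: float = 0.5) -> str:
    """Policy Engine stand-in: map a score to an action using assumed thresholds."""
    if score >= allow_at:
        return "allow"
    if score >= step_up_at:
        return "step_up_auth"   # require stronger authentication first
    return "deny"


if __name__ == "__main__":
    history = History(usual_hours=range(8, 18), known_locations={"HQ"})
    strong = {name: 1.0 for name in WEIGHTS}
    normal = AccessRequest("alice", hour=10, location="HQ", signals=strong)
    odd = AccessRequest("alice", hour=3, location="unknown", signals=strong)
    print(policy_decision(trust_score(normal, history)))
    print(policy_decision(trust_score(odd, history)))
```

The second request carries the same attribute scores as the first, so an algorithm that looked only at those attributes would treat both identically. The historical context is what lowers its score and leads to a request for stronger authentication.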

A ZTA can be implemented in various ways depending on an organization’s use case, business flows and risk profile, and the design of the ZTA’s authorization core may differ depending on the business function of the network. An agent model similar to the diagram shown above, with an agent on the data resource, may be sufficient for on-premises client-to-server communications, for example. In a cloud environment, however, it may not be practical to place an Enforcement Engine on every data resource within the Virtual Private Cloud (VPC). In this case, a resource group may be created through micro-segmentation from data assets that share the same classification, with a gateway handling the policy enforcement.
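The resource group model can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Resource` and `Gateway` classes, the classification labels and the `example_policy` thresholds are all hypothetical, and a real deployment would rely on the cloud provider’s or vendor’s own segmentation and enforcement features.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Resource:
    name: str
    classification: str   # hypothetical labels, e.g. "public", "confidential"


def build_resource_groups(resources: List[Resource]) -> Dict[str, List[str]]:
    """Group resources that share a classification into one micro-segment."""
    groups: Dict[str, List[str]] = {}
    for resource in resources:
        groups.setdefault(resource.classification, []).append(resource.name)
    return groups


class Gateway:
    """Hypothetical enforcement point placed in front of one resource group."""

    def __init__(self, classification: str, members: List[str],
                 policy_engine: Callable[[dict, str], bool]):
        self.classification = classification
        self.members = set(members)
        self.policy_engine = policy_engine

    def authorize(self, agent: dict, resource_name: str) -> bool:
        # Resources outside this group are not reachable through this gateway.
        if resource_name not in self.members:
            return False
        # The gateway enforces; the decision itself comes from the policy engine.
        return self.policy_engine(agent, self.classification)


def example_policy(agent: dict, classification: str) -> bool:
    """Stand-in policy engine: assumed minimum trust score per classification."""
    required = {"public": 0.3, "confidential": 0.8}
    # Unknown classifications fall back to a threshold of 1.0.
    return agent.get("trust_score", 0.0) >= required.get(classification, 1.0)


if __name__ == "__main__":
    resources = [
        Resource("wiki", "public"),
        Resource("payroll-db", "confidential"),
        Resource("hr-files", "confidential"),
    ]
    groups = build_resource_groups(resources)
    gateway = Gateway("confidential", groups["confidential"], example_policy)
    print(gateway.authorize({"trust_score": 0.9}, "payroll-db"))
    print(gateway.authorize({"trust_score": 0.5}, "payroll-db"))
```

One gateway per classification keeps the number of enforcement points small, at the cost of coarser control than an agent on every resource.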

A roadmap or plan of action, combined with a maturity assessment showing where the company currently stands in meeting Zero Trust, will help guide the business in making further investments in authorization core technology to fill in the gaps. A vendor whose products have historically worked well for the business may reasonably be considered again when evaluating supplemental technologies. Because open standards do not yet exist for key portions of the authorization core, such as the data elements provided by the Communication Agent, scoring, and others, it is important to carefully evaluate the vendor solutions that fill the gaps discovered during the maturity assessment. For example, the trust algorithm is a key component that may be supported by one vendor’s solution but not another’s, and the policy composition of one vendor’s solution may differ from that of others. Without specific standards in this arena, the business may also become locked into a vendor because of the high cost of migrating to another solution. The evaluation of any solution should take a holistic view of not only the vendor’s solution, but also the vendor’s business, how the solution links with other components in the architecture, its longevity, and how it can dynamically scale to handle increased load.